Introduction

As AI agents transition from demos and prototypes into real production systems, one uncomfortable truth keeps surfacing: agents often fail not because they lack intelligence, but because they lack planning. Without structure, agents hallucinate, drift from goals, misuse tools, and collapse under real-world complexity.

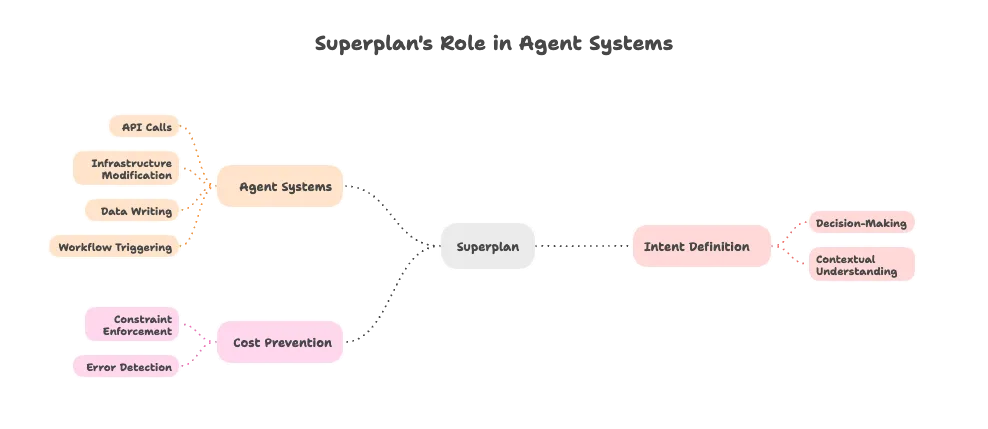

This is why the concept of an AI agent planner is rapidly becoming foundational. A planner is not just another component; it is the control layer that turns reasoning into reliable action. Platforms like SuperPlan formalize this idea by introducing a dedicated planning substrate that governs how AI agents think, decide, and execute.

This article is a deep dive into AI agent planning, why it matters, how it works, and how a structured planning layer dramatically improves reliability, safety, and trust in autonomous systems.

What Is an AI Agent Planner?

An AI agent planner is a system that defines what an AI agent should do, in what order, under which constraints, and with what verification. Instead of reacting directly to prompts or events, the agent plans before acting.

At its core, an AI agent planner enables:

- Clear intent definition

- Step-by-step execution logic

- Decision checkpoints

- Tool and API coordination

- Continuous feedback and correction

This planner becomes the backbone of AI agent planning and execution.
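
To make the definition concrete, here is a minimal sketch of how a plan could be represented as data: what to do, in what order, under which constraints, and with what verification. The `PlanStep` and `Plan` classes and the refund scenario are hypothetical names chosen for illustration, not the schema of any particular platform.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class PlanStep:
    """One unit of work in a plan (hypothetical structure for illustration)."""
    name: str                                             # what to do
    depends_on: list[str] = field(default_factory=list)   # order: steps that must finish first
    constraints: list[str] = field(default_factory=list)  # human-readable limits on the step
    # Verification: a check applied to the step's result before moving on.
    verify: Callable[[object], bool] = lambda result: result is not None


@dataclass
class Plan:
    intent: str
    steps: list[PlanStep]

    def unknown_dependencies(self) -> set[str]:
        """Return dependency names that do not match any step in the plan."""
        names = {s.name for s in self.steps}
        return {d for s in self.steps for d in s.depends_on} - names


plan = Plan(
    intent="Refund order 1234",
    steps=[
        PlanStep("lookup_order"),
        PlanStep(
            "issue_refund",
            depends_on=["lookup_order"],
            constraints=["amount <= order total"],
            verify=lambda result: result == "refunded",
        ),
    ],
)
```

Keeping the plan as plain data means it can be inspected, logged, and validated (for example with `plan.unknown_dependencies()`) before anything runs.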

Why AI Agent Planning Is Essential

The Failure of Prompt-Only Agents

Prompt-driven agents often:

- Guess when unsure

- Skip validation steps

- Hallucinate missing details

- Drift from objectives

- Break silently in production

This is why a formal AI agent planning framework is required. Planning is not a luxury; it is the difference between intelligence and dependability.

AI Agent Planning Framework Explained

An AI agent planning framework provides structure across the entire lifecycle of an agent’s work. It ensures that thinking, acting, and correcting happen in a controlled loop.

Key Capabilities

- Goal interpretation

- Task decomposition

- Dependency tracking

- Tool gating

- Execution monitoring

- Replanning logic

Frameworks like SuperPlan treat planning as a first-class system layer, not an embedded prompt trick.

The AI Agent Planning Layer

The AI agent planning layer sits between reasoning models (LLMs) and execution systems (tools, APIs, workflows). It decides when and whether actions should occur.

This planning layer for AI agents prevents impulsive execution and introduces strategic oversight.

It answers questions like:

- Is the goal clear?

- Are assumptions verified?

- Are prerequisites met?

- Is execution safe right now?
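
The four questions above can be sketched as a gate that runs before any action. This is an illustrative sketch only: `GateInput`, `gate`, and the deployment scenario are assumed names, and a real system would compute `safe_now` from its own signals.

```python
from dataclasses import dataclass, field


@dataclass
class GateInput:
    goal: str
    assumptions: dict[str, bool] = field(default_factory=dict)    # assumption -> verified?
    prerequisites: dict[str, bool] = field(default_factory=dict)  # prerequisite -> met?
    safe_now: bool = False  # supplied by the caller, e.g. "not in a change freeze"


def gate(inp: GateInput) -> list[str]:
    """Return the reasons execution is blocked; an empty list means proceed."""
    reasons = []
    if not inp.goal.strip():
        reasons.append("goal is not defined")
    reasons += [f"unverified assumption: {a}" for a, ok in inp.assumptions.items() if not ok]
    reasons += [f"unmet prerequisite: {p}" for p, ok in inp.prerequisites.items() if not ok]
    if not inp.safe_now:
        reasons.append("execution is not safe right now")
    return reasons


blocked = gate(GateInput(
    goal="Deploy v2",
    assumptions={"tests passed": True, "rollback exists": False},
    prerequisites={"approval": True},
    safe_now=True,
))
```

Returning reasons rather than a bare boolean gives the agent something specific to fix or escalate.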

Structured Planning for AI Agents

Structured planning for AI agents replaces improvisation with intention. Every action must trace back to a goal and a plan.

This structure:

- Reduces uncertainty

- Improves reproducibility

- Enables auditing

- Supports governance

It is especially critical for LLM agents operating across long workflows, where an early mistake carries into every later step.

AI Agent Execution Planning

Execution is where most agents fail. AI agent execution planning ensures that actions happen only when conditions are satisfied.

Execution planning includes:

- Ordering steps correctly

- Managing dependencies

- Controlling retries

- Handling partial failures

This transforms chaotic execution into a predictable pipeline.
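
As a rough sketch of these four concerns, the function below orders steps with the standard library's `graphlib.TopologicalSorter`, retries each step a bounded number of times, and skips any step whose dependencies did not succeed. The `run_plan` function, the step names, and the stub actions are hypothetical.

```python
from graphlib import TopologicalSorter


def run_plan(deps, actions, max_retries=2):
    """Run steps in dependency order with bounded retries.

    deps: step name -> set of step names it depends on
    actions: step name -> zero-argument callable that may raise
    Returns step name -> "ok", "failed", or "skipped".
    """
    status = {}
    for step in TopologicalSorter(deps).static_order():
        # Partial failure handling: skip a step if anything it needs did not succeed.
        if any(status.get(d) != "ok" for d in deps.get(step, ())):
            status[step] = "skipped"
            continue
        for _ in range(1 + max_retries):
            try:
                actions[step]()
                status[step] = "ok"
                break
            except Exception:
                status[step] = "failed"
    return status


calls = {"n": 0}

def flaky():
    """Fails once, then succeeds, to exercise the retry path."""
    calls["n"] += 1
    if calls["n"] < 2:
        raise RuntimeError("transient error")

def broken():
    raise RuntimeError("permanent error")

deps = {"fetch": set(), "transform": {"fetch"}, "publish": {"transform"}, "notify": {"publish"}}
actions = {"fetch": flaky, "transform": lambda: None, "publish": broken, "notify": lambda: None}
result = run_plan(deps, actions)
```

Here `fetch` recovers on retry, `publish` exhausts its retries, and `notify` is skipped instead of running against a failed dependency.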

AI Agent Decision Planning

Before acting, agents must decide. AI agent decision planning formalizes how choices are made under uncertainty.

It evaluates:

- Multiple paths

- Risk trade-offs

- Confidence thresholds

- Resource constraints

This prevents agents from choosing the most fluent answer over the most correct one.
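
One way to picture this is a selection function that filters candidate paths by a confidence threshold and a resource budget, then ranks what remains by confidence minus risk. Everything here is an assumption made for illustration: the `Option` fields, the scoring rule, and the numbers are not taken from any specific planner.

```python
from dataclasses import dataclass


@dataclass
class Option:
    name: str
    confidence: float  # estimated probability the path is correct, 0..1
    risk: float        # estimated cost of being wrong, 0..1
    cost: float        # resources needed, in arbitrary units


def choose(options, min_confidence=0.7, budget=10.0):
    """Pick the affordable, sufficiently confident option with the best score.

    Returns None when nothing qualifies, which the caller should treat as
    "gather more information or escalate" rather than "guess".
    """
    eligible = [o for o in options if o.confidence >= min_confidence and o.cost <= budget]
    if not eligible:
        return None
    # Illustrative scoring rule: reward confidence, penalize risk.
    return max(eligible, key=lambda o: o.confidence - o.risk)


options = [
    Option("answer_from_memory", confidence=0.55, risk=0.6, cost=1),
    Option("query_database", confidence=0.9, risk=0.1, cost=3),
    Option("full_reindex", confidence=0.95, risk=0.1, cost=50),
]
best = choose(options)
```

The important design choice is the `None` return: when no path clears the threshold, the agent has an explicit signal to stop rather than fall back to the most fluent answer.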

AI Agent Hallucinations: The Real Cause

AI agent hallucinations are rarely just a model problem. They emerge when:

- Goals are ambiguous

- Steps are skipped

- Verification is absent

- Execution is rushed

This is why planning is one of the most effective ways to reduce AI hallucinations.

How to Prevent AI Agent Hallucinations

To prevent AI agent hallucinations, you must stop agents from guessing.

A planning layer:

- Forces assumption listing

- Requires verification steps

- Routes uncertainty to tools

- Blocks unsupported claims

This is how teams build AI agents that hallucinate far less in real systems.
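
A minimal sketch of that behavior, assuming claims can be keyed by name: each claim the agent wants to make must be backed by verified evidence, missing evidence is requested from a tool, and anything still unsupported is blocked. The `check_claims` function and the order-support data are hypothetical.

```python
def check_claims(claims, evidence, lookup):
    """Split claims into supported ones and blocked ones.

    claims: list of claim keys the agent wants to state, e.g. "order_status"
    evidence: dict of already verified facts (claim key -> value); updated in place
    lookup: callable(claim key) -> value or None; stands in for a tool call
    """
    supported, blocked = {}, []
    for claim in claims:
        if claim not in evidence:
            # Route uncertainty to a tool instead of letting the model guess.
            value = lookup(claim)
            if value is not None:
                evidence[claim] = value
        if claim in evidence:
            supported[claim] = evidence[claim]
        else:
            blocked.append(claim)  # unsupported: must not appear in the output
    return supported, blocked


tool_data = {"delivery_date": "2024-05-01"}  # what the stub "tool" can answer
supported, blocked = check_claims(
    claims=["order_status", "delivery_date", "refund_eligibility"],
    evidence={"order_status": "shipped"},
    lookup=tool_data.get,
)
```

In this run, `refund_eligibility` has no evidence and no tool can supply it, so it ends up in `blocked` instead of being invented.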

AI Agent Drift and How to Prevent It

AI agent drift occurs when agents slowly deviate from their original goal.

To prevent AI agent drift, planners:

- Re-anchor every step to intent

- Track goal alignment continuously

- Enforce scope boundaries

Drift is a planning failure, not a reasoning failure.
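
The sketch below shows one simple way to check for drift on every step: the intent is frozen at planning time, and each proposed step is compared against its goal, its allowed actions, and a step limit. The `Intent` class, `drift_reason` function, and the example actions are assumptions for illustration; real alignment checks are usually fuzzier than string equality.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Intent:
    goal: str
    allowed_actions: frozenset[str]  # scope boundary fixed at planning time
    max_steps: int                   # guard against open-ended wandering


def drift_reason(intent: Intent, step_index: int, action: str, stated_goal: str) -> Optional[str]:
    """Return why a proposed step drifts from the intent, or None if it is aligned."""
    if stated_goal != intent.goal:
        return f"step targets '{stated_goal}', not '{intent.goal}'"
    if action not in intent.allowed_actions:
        return f"action '{action}' is outside the allowed scope"
    if step_index >= intent.max_steps:
        return "step limit reached"
    return None


intent = Intent(
    goal="summarize Q3 sales",
    allowed_actions=frozenset({"read_report", "write_summary"}),
    max_steps=5,
)
aligned = drift_reason(intent, 0, "read_report", "summarize Q3 sales")
drifted = drift_reason(intent, 1, "send_email", "summarize Q3 sales")
```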

AI Agent Failures in Production

Most AI agent failures in production stem from:

- Missing constraints

- Tool misuse

- Cascading hallucinations

- Lack of rollback strategies

A proper planning layer catches these failures before they propagate.
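
For the rollback case in particular, a common pattern is to pair each action with a compensating action and undo completed work in reverse order when a later step fails. This is a generic sketch of that pattern, not a description of any platform's mechanism; `run_with_rollback` and the order-fulfilment steps are hypothetical.

```python
def run_with_rollback(steps):
    """Run (do, undo) pairs in order; on failure, undo completed steps in reverse.

    Returns True if every step succeeded, False if a rollback happened.
    """
    done = []
    for do, undo in steps:
        try:
            do()
        except Exception:
            for compensate in reversed(done):
                compensate()
            return False
        done.append(undo)
    return True


log = []

def ship():
    raise RuntimeError("shipping API unavailable")

ok = run_with_rollback([
    (lambda: log.append("reserve"), lambda: log.append("release")),
    (lambda: log.append("charge"), lambda: log.append("refund")),
    (ship, lambda: log.append("cancel_shipment")),
])
```

After the failed `ship` step, the log reads reserve, charge, refund, release: the failure is contained instead of leaving a charged but unshipped order.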

AI Agent Reliability and Governance

Reliability

AI agent reliability comes from predictability. Planning creates repeatable behavior, even under uncertainty.

Governance

AI agent governance requires visibility into decisions. Structured plans make actions auditable and explainable.

AI Agent Execution Framework

An AI agent execution framework is built on top of planning. It ensures:

- Safe execution

- Controlled retries

- Dependency resolution

- Error isolation

Execution without planning is reckless automation.

Tool Calling AI Agents

Tool calling AI agents are especially vulnerable to hallucination, such as calling nonexistent APIs or passing incorrect parameters.

Planning ensures:

- Tool capability awareness

- Validation before invocation

- Post-execution verification

This is essential for AI agents calling APIs in production.
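
These three checks can be sketched with a small tool registry. The example uses the standard library's `inspect.signature(...).bind(...)` to reject missing or unexpected parameter names before the call; it does not check argument types. The registry, the `tool` decorator, `call_tool`, and the `get_weather` stub are all hypothetical.

```python
import inspect

TOOLS = {}  # capability awareness: the only tools the agent may call


def tool(fn):
    """Register a function as a callable tool."""
    TOOLS[fn.__name__] = fn
    return fn


@tool
def get_weather(city: str, units: str = "metric") -> dict:
    return {"city": city, "units": units, "temp": 21}  # stub result for the example


def call_tool(name, args, verify=lambda result: result is not None):
    """Validate, invoke, and verify a tool call requested by the model."""
    if name not in TOOLS:
        raise LookupError(f"unknown tool: {name}")
    fn = TOOLS[name]
    try:
        # Validation before invocation: parameter names must match the signature.
        inspect.signature(fn).bind(**args)
    except TypeError as exc:
        raise ValueError(f"bad arguments for {name}: {exc}") from exc
    result = fn(**args)
    # Post-execution verification: reject results that fail the caller's check.
    if not verify(result):
        raise RuntimeError(f"{name} returned an unexpected result")
    return result


weather = call_tool("get_weather", {"city": "Oslo"}, verify=lambda r: "temp" in r)
```

A request for a tool that was never registered raises `LookupError`, and a call with a misspelled parameter such as `{"town": "Oslo"}` raises `ValueError` before the tool runs.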

MCP AI Agents and Orchestration

Modern systems increasingly rely on agents that reach tools through the Model Context Protocol (MCP) and on distributed coordination.

An AI agent orchestration layer assigns roles, synchronizes timelines, and resolves conflicts across agents.

AI Agent Control Layer

The AI agent control layer governs execution permissions, safety checks, and constraints.

This layer enforces:

- Budget limits

- Policy compliance

- Tool boundaries
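
As an illustration, the three limits can be combined into a single authorization check that every action passes through. The `ControlLayer` class is a hypothetical sketch, and the keyword match on `forbidden_terms` is a toy stand-in for real policy rules.

```python
from dataclasses import dataclass, field


@dataclass
class ControlLayer:
    budget: float                                           # remaining spend, arbitrary units
    allowed_tools: set[str]                                 # tool boundary
    forbidden_terms: set[str] = field(default_factory=set)  # toy stand-in for policy rules

    def authorize(self, tool: str, cost: float, payload: str) -> tuple[bool, str]:
        """Approve or reject an action; the budget is charged only on approval."""
        if tool not in self.allowed_tools:
            return False, "tool not permitted"
        if any(term in payload.lower() for term in self.forbidden_terms):
            return False, "policy violation"
        if cost > self.budget:
            return False, "budget exceeded"
        self.budget -= cost
        return True, "ok"


control = ControlLayer(budget=5.0, allowed_tools={"search", "email"}, forbidden_terms={"password"})
first = control.authorize("search", 2.0, "quarterly revenue")
second = control.authorize("shell", 0.1, "ls")
third = control.authorize("email", 1.0, "Here is the admin password")
fourth = control.authorize("search", 4.0, "follow-up query")
```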

AI Agent Safety in Production

True AI agent safety in production is achieved at the planning level, not by post-hoc filters.

Planning prevents:

- Unsafe actions

- Unauthorized access

- Irreversible mistakes

How to Plan AI Agent Execution

To plan AI agent execution:

- Define intent clearly

- Decompose tasks

- Identify assumptions

- Gate execution

- Monitor outcomes

- Replan as needed

This is planning before AI agent execution in practice.
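
Put together, the steps form a loop. The sketch below covers a simplified version of it: require an intent, build a plan, run it while recording outcomes, and replan when a step fails. The function name, the callback signatures, and the price-lookup scenario are assumptions for illustration; assumption checks and gating are left to the `make_plan` and `execute` callbacks.

```python
def run_with_replanning(intent, make_plan, execute, is_done, max_rounds=3):
    """Plan, execute, monitor, and replan until the intent is met.

    make_plan(intent, history) -> list of step names
    execute(step) -> (ok: bool, observation: str)
    is_done(history) -> bool
    """
    if not intent.strip():
        raise ValueError("intent must be defined before planning")
    history = []
    for _ in range(max_rounds):
        for step in make_plan(intent, history):
            ok, observation = execute(step)
            history.append((step, ok, observation))  # monitor outcomes
            if not ok:
                break  # stop this plan and replan with what was learned
        if is_done(history):
            return history
    raise RuntimeError("intent not met within the replanning limit")


def make_plan(intent, history):
    """Fall back to the cache once the API step has been seen to fail."""
    failed = {step for step, ok, _ in history if not ok}
    return ["fetch_from_cache"] if "fetch_from_api" in failed else ["fetch_from_api"]


def execute(step):
    """Stub executor: only the cache path succeeds in this example."""
    return (step == "fetch_from_cache", f"ran {step}")


history = run_with_replanning(
    "Get current price", make_plan, execute,
    is_done=lambda h: bool(h) and h[-1][1],
)
```

The `max_rounds` limit matters: without it, an agent that keeps failing would replan forever.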

Why AI Agents Hallucinate in Workflows

AI agents hallucinate in workflows for a simple reason: workflows compress reasoning, leaving little room to check assumptions between steps.

Planning expands reasoning safely and deliberately.

The AI Planning Layer and Planning Substrate

An AI planning layer acts as a planning substrate for AI: a shared foundation for reasoning, execution, and correction.

This substrate is model-agnostic, so plans stay usable when the underlying model changes.

Decision Planning and Intent Definition

Decision planning for AI agents starts with intent definition.

If intent is unclear, execution will fail, no matter how powerful the model.

Conclusion

The future of autonomous systems does not belong to bigger models alone. It belongs to planners.

By adopting an AI agent planner and a dedicated planning layer for AI agents, teams can:

- Reduce hallucinations

- Prevent drift

- Improve reliability

- Enforce governance

- Ship safe production systems

Platforms like SuperPlan demonstrate a simple truth:

AI that plans before acting is AI you can trust.